Tools with Friendly Learning Curve: ddev

Just start it: ddev for local Drupal development

Some years ago, a frontend developer colleague suggested that we introduce SASS, as it requires almost no preparation to start using it, and as we progressed we could use more and more of it. He proved to be right. A couple of months ago, our CTO, Amitai, made a similar move: he suggested using ddev as part of rebuilding our starter kit for a Drupal 8 project. I had the same feeling, even though I did not know all the details about the tool. It felt right to introduce it, and it quickly became evident that it would be beneficial.

Here’s the story of our affair with it.

For You

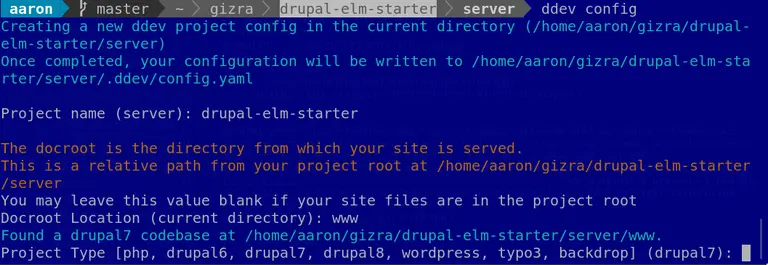

After the installation, a friendly command-line wizard (ddev config) asks you a few questions.

It gives you an almost perfect configuration, and you can review the generated YAML files in the .ddev directory. In .ddev/config.yaml, pay attention to router_http_port and router_https_port: these ports must be free, but the default port numbers are almost certainly occupied already if Nginx or Apache runs locally on your development system.
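To decide which values to put into router_http_port and router_https_port, it helps to check what is already listening locally. The following is only a minimal sketch: the function names port_in_use and first_free_port and the candidate port numbers are made up for illustration, and the check relies on bash's /dev/tcp pseudo-device, so it needs bash rather than plain sh.

```shell
#!/usr/bin/env bash

# Returns 0 when something accepts TCP connections on 127.0.0.1:<port>.
# Hypothetical helper; uses bash's built-in /dev/tcp redirection.
port_in_use() {
  (exec 3<>"/dev/tcp/127.0.0.1/$1") 2>/dev/null
}

# Prints the first candidate port that nothing is listening on.
# Returns 1 when every candidate is occupied.
first_free_port() {
  local port
  for port in "$@"; do
    if ! port_in_use "$port"; then
      echo "$port"
      return 0
    fi
  done
  return 1
}

# Example (candidate ports are arbitrary):
#   first_free_port 80 8080 8880
```

Whatever the function prints can then be set by hand as router_http_port in .ddev/config.yaml; the same check with another candidate list works for router_https_port.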

After the configuration, ddev start creates the Docker containers you need, nicely pre-configured according to the selection. Even if your site was installed previously, you’ll be faced with the installation process when you try to access the URL as the database inside the container is empty, so you can install there (again) by hand.

You have a site inside ddev, congratulations!

For All of Your Coworkers

So now ddev serves the full stack under your site, but is it ready for teamwork? Not yet.

You probably have your own automation that bootstraps the local development environment (site installation, specific configurations, theme compilation, just to name a few). Now it’s time to integrate that into ddev.

The config.yaml provides various directives to hook into the key processes.

A basic Drupal 8 example in our case looks like this:

    hooks:
      pre-start:
        - exec-host: "composer install"
      post-start:
        # Install Drupal after start
        - exec: "drush site-install custom_profile -y --db-url=mysql://db:db@db/db --account-pass=admin --existing-config"
        - exec: "composer global require drupal/coder:^8.3.1"
        - exec: "composer global require dealerdirect/phpcodesniffer-composer-installer"
      post-import-db:
        # Sanitize email addresses
        - exec: "drush sqlq \"UPDATE users_field_data SET mail = concat(mail, '.test') WHERE uid > 0\""
        # Enable the environment indicator module
        - exec: "drush en -y environment_indicator"
        # Clear the cache, revert the config
        - exec: "drush cr"
        - exec: "drush cim -y"
        - exec: "drush entup -y"
        - exec: "drush cr"
        # Index content
        - exec: "drush search-api:clear"
        - exec: "drush search-api:index"

After the container is up and running, you might like to automate the installation. In some projects, that’s just the dependencies and the site installation, but sometimes you need additional steps, like theme compilation.

In a development team, you will probably have dev, stage and live environments that you would like to sync to local routinely, for debugging and more. For this, there are integrations with hosting providers, so all you need is a ddev pull and a short configuration in .ddev/import.yaml:

    provider: pantheon
    site: client-project
    environment: test

After the files and database are in sync, everything in post-import-db will be applied, so we can drop the existing scripts we had for this purpose.

We still prefer to have a shell script wrapper in front of ddev, so we have even more freedom to tweak things and keep everything automated. Most notably, ./install does a regular ddev start, which results in a fresh installation, but ./install -p saves the time of a full install if you would like to get a copy of a Pantheon environment.
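To give an idea of what such a wrapper can look like, here is a minimal sketch. It is not our actual ./install script: the function name run_install is hypothetical, and the sketch simply runs ddev pull -y after ddev start when -p is given. How the real script avoids the fresh site installation in that case is not shown here.

```shell
#!/usr/bin/env bash

# Hypothetical sketch of an ./install wrapper around ddev.
#   ./install      regular "ddev start" (fresh installation via the hooks)
#   ./install -p   additionally pulls files and database with "ddev pull -y"
run_install() {
  local pull=0 opt
  local OPTIND=1

  while getopts "p" opt; do
    case "$opt" in
      p) pull=1 ;;
      *) echo "Usage: ./install [-p]" >&2; return 2 ;;
    esac
  done

  ddev start || return 1

  if [[ "$pull" -eq 1 ]]; then
    # Relies on .ddev/import.yaml being configured for the provider.
    ddev pull -y || return 1
  fi
}

# To use this as a script, end the file with:
#   run_install "$@"
```

Keeping the logic in a function makes it easy to add further flags later without touching the ddev configuration itself.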

For the Automated Testing

Now that the team is happy with the new tool, they might run into some issues, but for us none of them was a blocker. The next step is to make sure that the CI also uses the same environment. Before doing that, you should think about whether it’s more important to match the production environment or to make Travis really easy to debug. If you execute realistic, browser-based tests, you might want to go with the first option and leave ddev out of the testing flow; but for us, it was desirable to spin up a site locally that is identical to the one inside Travis. And unlike with our old custom Docker image, the maintenance of the image is taken care of for us.

Here’s our shell script that spins up a Drupal site in Travis:

    #!/usr/bin/env bash
    set -e

    # Load helper functionality.
    source ci-scripts/helper_functions.sh

    # -------------------------------------------------- #
    # Installing ddev dependencies.
    # -------------------------------------------------- #
    print_message "Install Docker Compose."
    sudo rm /usr/local/bin/docker-compose
    curl -s -L "https://github.com/docker/compose/releases/download/1.22.0/docker-compose-$(uname -s)-$(uname -m)" > docker-compose
    chmod +x docker-compose
    sudo mv docker-compose /usr/local/bin

    print_message "Upgrade Docker."
    sudo apt -q update -y
    sudo apt -q install --only-upgrade docker-ce -y

    # -------------------------------------------------- #
    # Installing ddev.
    # -------------------------------------------------- #
    print_message "Install ddev."
    curl -s -L https://raw.githubusercontent.com/drud/ddev/master/scripts/install_ddev.sh | bash

    # -------------------------------------------------- #
    # Configuring ddev.
    # -------------------------------------------------- #
    print_message "Configuring ddev."
    mkdir ~/.ddev
    cp "$ROOT_DIR/ci-scripts/global_config.yaml" ~/.ddev/

    # -------------------------------------------------- #
    # Installing Profile.
    # -------------------------------------------------- #
    print_message "Install Drupal."
    ddev auth-pantheon "$PANTHEON_KEY"

    cd "$ROOT_DIR"/drupal || exit 1
    if [[ -n "$TEST_WEBDRIVERIO" ]]; then
      # As we pull the DB always for WDIO, here we make sure we do not do a fresh
      # install on Travis.
      cp "$ROOT_DIR"/ci-scripts/ddev.config.travis.yaml "$ROOT_DIR"/drupal/.ddev/config.travis.yaml
      # Configures the ddev pull with Pantheon environment data.
      cp "$ROOT_DIR"/ci-scripts/ddev_import.yaml "$ROOT_DIR"/drupal/.ddev/import.yaml
    fi

    ddev start
    check_last_command

    if [[ -n "$TEST_WEBDRIVERIO" ]]; then
      ddev pull -y
    fi
    check_last_command

As you can see, we even rely on the hosting provider integration, but of course that’s optional. All you need to do after setting up the dependencies and the configuration is to run ddev start, and then you can launch tests of any kind.

All the custom bash functions above are adapted from https://github.com/Gizra/drupal-elm-starter/blob/master/ci-scripts/helper_functions.sh, and we are in the process of preparing a polished starter kit for Drupal 8, needless to say, with ddev.
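If you do not want to dig into that file, the two helpers used in the script, print_message and check_last_command, could look roughly like the sketch below. These are hypothetical stand-ins written for this post, not copies of the linked implementations, so the output format and the exact behaviour are assumptions.

```shell
#!/usr/bin/env bash

# Hypothetical stand-in: prints a clearly visible log line.
print_message() {
  printf '==> %s\n' "$1"
}

# Hypothetical stand-in: aborts the build when the command that ran
# immediately before this call exited with a non-zero status.
check_last_command() {
  local rc=$?
  if [[ "$rc" -ne 0 ]]; then
    print_message "Command failed with exit code $rc." >&2
    exit "$rc"
  fi
}
```

Note that a script running under set -e already stops at the first failing simple command, so an explicit check like this mainly helps by printing a readable message in scripts or code paths where set -e is not in effect.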

One key step is to make ddev non-interactive; see the global_config.yaml that the script copies:

    APIVersion: v1.7.1
    omit_containers: []
    instrumentation_opt_in: false
    last_used_version: v1.7.1

This way, it does not ask about the data collection opt-in, which would break the non-interactive Travis session. If you are interested in using ddev pull as well, use encrypted environment variables to pass the machine token securely to Travis.
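As a rough illustration of the token handling: the encrypted variable can be added once with the Travis CLI, and the CI script can fail early with a readable message when the variable is missing. The require_env helper below is hypothetical and not part of our scripts; PANTHEON_KEY is the variable name the script above passes to ddev auth-pantheon.

```shell
#!/usr/bin/env bash

# One-time step on a developer machine with the Travis CLI installed
# (replace the placeholder with a real machine token):
#   travis encrypt PANTHEON_KEY=<machine-token> --add env.global

# Hypothetical guard: returns 1 with a message when the named
# environment variable is unset or empty.
require_env() {
  local name="$1"
  if [[ -z "${!name:-}" ]]; then
    echo "Missing required environment variable: $name" >&2
    return 1
  fi
}

# Example use before authenticating:
#   require_env PANTHEON_KEY && ddev auth-pantheon "$PANTHEON_KEY"
```

Keep in mind that Travis does not expose encrypted variables to pull requests coming from forks, so a guard like this also makes that situation easier to diagnose.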

The Icing on the Cake

ddev has a welcoming developer community. We got a quick and meaningful reaction to our first issue, and by the time of writing this blog post, we already have a merged PR that makes ddev play nicely with Drupal-based webservices out of the box. Contributing to this project is definitely rewarding: there are 48 contributors, and the number is growing.

The Scene of the Local Development Environments

Why ddev? Why not a more popular choice such as Lando or Drupal VM? For us, the main reasons were the Pantheon integration and the pace of development. It definitely has momentum. In 2018, it was the 13th choice of local development environment among Drupal developers; in 2019, it is in 9th place according to the 2019 Drupal Local Development survey. This is what you sense when you try to contribute: how open and active the project is. What is certain, based on the survey, is that Docker-based environments are now the most popular. And with a frontend that hides all the pain of working with pure Docker/docker-compose commands, it’s clear why. Try it (again) these days: you can really forget the hassle and enjoy the benefits!

Áron Novák